Services description#

This section provides more details on functions of the LP services.

Databases can be omitted in the following figures.

See the table with the resource consumption of each of the services listed below in the "Resource consumption by services" section.

General information about services#

Worker processes#

For LUNA PLATFORM services, you can set the number of workers to use additional resources and system memory to process requests to the service. A service will automatically spin up multiple processes and route traffic between the processes.

When starting the service in a Docker container, the number of workers is set using the WORKER_COUNT parameter.

For example, if you set the value WORKER_COUNT=2 for the Faces service, then the service will consume 2 times more resources and memory.

Note the number of available cores on your server when utilizing this feature.

Worker processes utilization is an alternative way for linear service scaling. It is recommended to use additional worker processes when increasing the number of service instances on the same server.

It is not recommended to use additional worker processes for the Remote SDK service when it utilizes GPU. Problems may occur if there is not enough GPU memory, and the workers will interfere with each other.

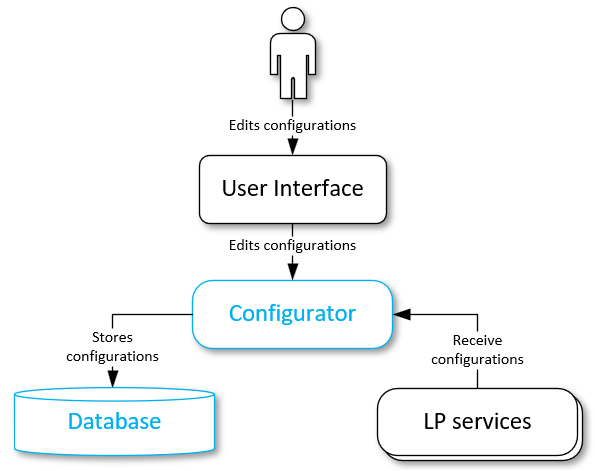

Automatic configurations reload#

LP services support the auto-reload of configurations. When a setting is changed, it is automatically updated for all the instances of the corresponding services. When this feature is enabled, no manual restart of services is required.

This feature is available for all the settings provided for each Python service. You should enable the feature manually upon each service launching. See the "Enable automatic configuration reload" section.

Starting with version 5.5.0 the configuration reload for Faces and Python Matcher services is done mostly by restarting appropriate processes.

Restrictions#

Service can work incorrectly while new settings are being applied. It is strongly recommended not to send requests to the service when you change important settings (DB setting, work plugins list, and others).

New settings appliance may lead to service restart and caches resetting (e. g., Python Matcher service cache). For example, the default descriptor version changing will lead to the LP restart. Changing the logging level does not cause service restart (if a valid setting value was provided).

Enable automatic configuration reload#

You can enable this feature by specifying a --config-reload option in the command line. In Docker containers, the feature is enabled using the "RELOAD_CONFIG" option.

You can specify the configurations check period in the --pulling-time command line argument. The value is set to 10 seconds by default. In Docker containers, the feature is enabled using the "RELOAD_CONFIG_INTERVAL" option.

Configurations update process#

LP services periodically receive settings from the Configurator service or configuration files. It depends on the way of configurations receiving for a particular service.

Each service compares its existing settings with the received settings:

-

If service settings were changed, they will pulled and applied.

-

If the configurations pulling has failed, the service will continue working without applying any changes to the existing configurations.

-

If check connections with new settings have failed, the service will retry new configurations pulling after 5 seconds. The service will shut down after 5 failed attempts.

-

-

If current settings and new pulled settings are the same, the Configurator service will not perform any actions.

Database migration execution#

You should execute migration scripts to update your database structure when upgrading to new LP builds. By default, migrations are automatically applied when running db_create script.

This method may be useful when you need to rollback to the previous LUNA PLATFORM build or upgrade the database structure without changing the stored data. Anyway, it is recommended to create the backup of your database before applying any changes.

You can run migrations from a container or use a single command.

Single command#

The example is given for the Tasks service.

docker run \

-v /etc/localtime:/etc/localtime:ro \

-v /tmp/logs/tasks:/srv/logs \

--rm \

--network=host \

dockerhub.visionlabs.ru/luna/luna-tasks:v.3.22.22 \

alembic -x luna-config=http://127.0.0.1:5070/1 upgrade head

Running from container#

To run migrations from a container follow these steps (the example is given for the Configurator service):

-

Go to the service docker container. See the "Enter container" section in LP 5 installation manual.

-

Run the migrations.

For most of the services, the configuration parameters should be received from the Configurator service and the command is the following:

alembic -x luna-config=http://127.0.0.1:5070/1 upgrade head

-x luna-config=http://127.0.0.1:5070/1 — Specifies that the configuration parameters for migrations should be received from the Configurator service.

For the Configurator service the parameters are received from "srv/luna_configurator/configs/config.conf" file.

You should use the following command for the Configurator service:

alembic upgrade head

- Exit the container. The container will be removed after you exit.

exit

API service#

LUNA API is a facial recognition web service. It provides a RESTful interface for interaction with other LUNA PLATFORM services.

Using the API service you can send requests to other LP services and solve the following problems:

-

Images processing and analysis:

-

Face/body detection in photos.

-

Face attributes (age, gender, ethnicity) and face parameters (head pose, emotions, gaze direction, eyes attributes, mouth attributes) estimation.

-

Body parameters (age, gender, accessories, headwear, colors of upper and lower clothing, type of sleeves) estimation.

-

-

Search for similar faces/bodies in the database.

-

Storage of the received face attributes in databases.

-

Creation of lists to search in.

-

Statistics gathering.

-

Flexible request management to meet user data processing requirements.

Remote SDK service#

The Remote SDK service is used to:

- Perform face detection and face parameters estimation.

- Perform body detection and body parameters estimation.

- Create samples.

- Perform extraction of basic attributes and descriptor, including aggregated ones.

- Process images using handlers and verifiers policies.

Face, body detection, descriptor extraction, estimation of parameters and attributes are performed using neural networks. The algorithm evolves with time and new neural networks appear. They may differ from each other by performance and precision. You should choose a neural network following the business case of your company.

Remote SDK with GPU#

Remote SDK service can utilize GPU instead of CPU for calculations. A single GPU is utilized per Remote SDK service instance.

Attributes extraction on the GPU is engineered for maximum throughput. The input images are processed in batches. This reduces computation cost per image but does not provide the shortest latency per image.

GPU acceleration is designed for high load applications where request counts per second consistently reach thousands. It won’t be beneficial to use GPU acceleration in non-extensively loaded scenarios where latency matters.

Aggregation#

Based on all images transferred in one request, a single set of basic attributes and an aggregated descriptor can be obtained. In addition, during the creation of the event, the aggregation of the received values of Liveness, emotions, medical mask states for faces and upper/lower body, gender, age and the body accessories for bodies is performed.

The matching results are more precise for aggregated descriptor. It is recommended to use aggregation when several images were received from the same camera. It is not guaranteed that aggregated descriptors provide improvements in other cases.

It is considered that each parameter is aggregated from sample. Use the "aggregate_attributes" parameter of the "extract attributes" (only for faces) and "sdk" requests to enable attributes aggregation. Aggregation of liveness, emotion, and mask states for faces and upper body, gender, age and the body accessories for bodies is available using the "aggregate_attributes" parameter in the "generate events", provided that these parameters were estimated earlier in the handler, as well as in the "sdk" request.

An array of "sample_ids" is returned in the response even if there was only a single sample used in the request. In this case, a single sample ID is included in the array.

Descriptor formats#

LUNA PLATFORM supports the following descriptor formats:

|

Descriptor format |

File content |

Size |

|---|---|---|

|

SDK |

Set of bytes (descriptor itself). |

Size depends on neural network version (see "Neural networks" section). |

|

Set of bytes indicating the version. |

Size is 4 bytes. |

|

|

Set of signature bytes. |

Size is 4 bytes. |

|

|

Raw |

Set of bytes (descriptor itself) encoded in Base64. |

Size depends on neural network version (see "Neural networks" section). |

|

XPK files |

Files that store descriptor in SDK format. |

Depends on the number of descriptors inside the file. |

Note: Raw and XPK files are deprecated. It is recommended to work with the SDK format.

SDK and Raw formats can be directly linked to a face or stored in a temporary attribute (see "Create objects using external data" below).

In most extraction requests, the descriptor is saved to the database as set of bytes, without being returned in the response body.

There are several requests that can be used to get descriptor in SDK format:

- request "sdk";

- request "get temporary attributes and "get temporary attribute.

With LUNA PLATFORM, it is not possible to get descriptors in Raw and SDK formats. You can use other VisionLabs software to get these formats (eg LUNA SDK). Descriptors obtained using the above resources or using the VisionLabs software are referred to as raw descriptors.

Use raw descriptors for matching

The descriptor formats described above can be used in requests for the use of raw descriptors.

An external raw descriptor can be used as reference in the following resources:

- "/matcher/faces"

- "/matcher/bodies"

- "/matcher/raw"

- "/handlers/{handler_id}/events" when "multipart/form-data" request body schema is set

- "/verifiers/{verifier_id}/verifications" when "multipart/form-data" request body schema is set

- "/verifiers/{verifier_id}/raw"

An external raw descriptor can be used as a candidate in the following resources:

Create objects using external data#

You can create a temporary attribute of face by sending basic attributes and descriptors to LUNA PLATFORM. Thus you can store this data in external storage and send it to LP for the processing of requests only.

You can create an attribute or face using:

- Basic attributes and their samples.

- Descriptors (raw descriptor in Base64 or SDK descriptor in Base64).

- Both basic attributes and descriptors with the corresponding data.

Samples are optional and are not required for an attribute or face creation.

See the "create temporary attribute" request and "create face request" for details.

Checking images for compliance with standards#

The Remote SDK service enables you to check images according to the ISO/IEC 19794-5:2011 standard or user-specified thresholds using three ways:

- Request "iso".

- Parameter "estimate_face_quality" of the request "detect faces".

- Group of parameters "face_quality" of the policy "detect_policy" of the request "generate events".

For example, it is necessary to check whether the image is of a suitable format, specifying the "JPEG" and "JPEG2000" formats as a satisfactory condition. If the image fits this condition, the system will return the value "1", if the format of the processed image is different from the specified condition, the system will return the value "0". If the conditions are not set, the system will return the estimated value of the image format.

The list of estimations and checks performed is described in the "Image check" section.

The ability to perform check and estimation of image parameters is regulated by a special parameter in the license file.

Enable/disable several estimators and detectors#

By default, the Remote SDK service is launched with all estimators and detectors enabled. If necessary, you can disable the use of some estimators or detectors when launching the Remote SDK container. Disabling unnecessary estimators enables you to save RAM or GPU memory, since when the Remote SDK service launches, the possibility of performing these estimates is checked and neural networks are loaded into memory.

If you disable the estimator or detector, you can also remove its neural network from the Remote SDK container.

Disabling estimators or detectors is possible by transferring documents with the names of estimators to the launch command of the Remote SDK service. Arguments are passed to the container using the "EXTEND_CMD" variable.

List of available estimators:

| Argument | Description |

|---|---|

| --enable-all-estimators-by-default | enable all estimators by default |

| --enable-human-detector | simultaneous detector of bodies and bodies |

| --enable-face-detector | face detector |

| --enable-body-detector | body detector |

| --enable-people-count-estimator | people count estimator |

| --enable-face-landmarks5-estimator | face landmarks5 estimator |

| --enable-face-landmarks68-estimator | face landmarks68 estimator |

| --enable-head-pose-estimator | head pose estimator |

| --enable-deepfake-estimator | Deepfake estimator |

| --enable-liveness-estimator | Liveness estimator |

| --enable-fisheye-estimator | FishEye effect estimator |

| --enable-face-detection-background-estimator | image background estimator |

| --enable-face-warp-estimator | face sample estimator |

| --enable-body-warp-estimator | body sample estimator |

| --enable-quality-estimator | image quality estimator |

| --enable-image-color-type-estimator | face color type estimator |

| --enable-face-natural-light-estimator | natural light estimator |

| --enable-eyes-estimator | eyes estimator |

| --enable-gaze-estimator | gaze estimator |

| --enable-mouth-attributes-estimator | mouth attributes estimator |

| --enable-emotions-estimator | emotions estimator |

| --enable-mask-estimator | mask estimator |

| --enable-glasses-estimator | glasses estimator |

| --enable-eyebrow-expression-estimator | eyebrow estimator |

| --enable-red-eyes-estimator | red eyes estimator |

| --enable-headwear-estimator | headwear estimator |

| --enable-basic-attributes-estimator | basic attributes estimator |

| --enable-face-descriptor-estimator | face descriptor extraction estimator |

| --enable-body-descriptor-estimator | body descriptor extraction estimator |

| --enable-body-attributes-estimator | body attributes estimator |

You can explicitly specify which estimators and detectors are enabled or disabled by passing the appropriate arguments to the "EXTEND_CMD" variable, or you can enable (by default) or disable them all with the enable-all-estimators-by-default argument. You can turn off the use of all estimators and detectors, and then turn on specific estimators by passing the appropriate arguments.

Example of a command to start the Remote SDK service using only a face detector and estimators of a face sample and emotions.

docker run \

...

--env=EXTEND_CMD="--enable-all-estimators-by-default=0 --enable-face-detector=1 --enable-face-warp-estimator=1 --enable-emotions-estimator=1" \

...

Handlers service#

The Handlers service is used to create and store handlers and verifiers.

The data of handlers and verifiers are stored in the Handlers database.

Send events to third-party service#

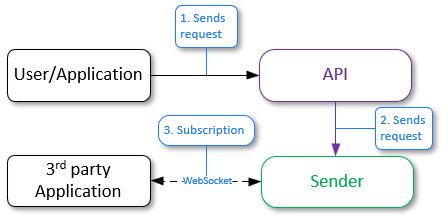

LUNA PLATFORM provides the ability to send notifications via web sockets or web hooks (HTTP). This is facilitated by the "callbacks" policy.

Sending notifications via web sockets

The "callbacks" policy with the luna-ws-notification parameter provides a notification mechanism based on WebSocket principles. This type of callback allows receiving events through web sockets from the Sender service, which interacts with the Handlers service via the pub/sub mechanism through the Redis channel. This ensures direct, instant data updates using a bidirectional communication channel between the client and server.

Advantages:

- Direct, instant data updates via web sockets.

- Efficient use of an open bidirectional channel.

- Low latency in notification delivery.

In previous versions of LUNA PLATFORM, the "notification_policy" was used. It is now considered deprecated and is not recommended for use. The main advantage of the callback mechanism over the deprecated "notification_policy" is the ability to specify multiple callbacks with different filters in the handler creation request, resulting in only one event being sent.

See detailed information in the "Sender service" section.

Sending notifications via web hooks

The "callbacks" policy with the http parameter provides a notification mechanism based on webhook principles for HTTP. They ensure asynchronous interaction between systems, allowing external services to react to the occurrence of events. Within this policy, you can specify specific parameters such as protocol type, external system address, and authorization parameters and data.

Advantages:

- More flexible notification setup mechanism.

- Easy integration with various external systems.

- Uses familiar HTTP protocols and configurations.

Image Store service#

The Image Store service stores the following data:

- Face and body samples. Samples are stored in Image Store by the Remote SDK service or you can save an external sample using the "samples" > "detect faces" and "samples" > "save face/body sample" requests.

- Reports about tasks. Reports are stored by the Tasks service workers.

- Any objects loaded using the "create objects" request.

- Clusterization information.

Image Store can save data either on a local storage device or in S3-compatible cloud storage (Amazon S3, etc.).

Buckets description#

The data is stored in special directories called buckets. Each bucket has a unique name. Bucket names should be set in lower case.

The following buckets are used in LP:

- "visionlabs-samples". This bucket stores face samples.

- "visionlabs-bodies-samples". This bucket stores human bodies samples.

- "visionlabs-image-origin". This bucket stores source images.

- "visionlabs-objects". This bucket stores objects.

- "task-result". This bucket stores the results received after tasks processing using the Tasks service.

- "portraits". This bucket stores portraits. The bucket is required for the usage of Backport 3 service.

Buckets creation is described in LP 5 installation manual in the "Buckets creation" section.

After running the Image Store container and the commands for containers creation, the buckets are saved to local storage or S3.

By default, local files are stored in the "/var/lib/luna/current/example-docker/image_store" directory on the server. They are saved in the "/srv/local_storage/" directory in the Image Store container.

Bucket includes directories with samples or other data. The names of the directories correspond to the first four letters of the sample ID. All the samples are distributed to these directories according to their first four ID symbols.

Next to the bucket object is a "*.meta.json" file containing the "account_id" used when performing the request. If the bucket object is not a sample (for example, the bucket object is a JSON file in the "task-result" bucket), then the "Content-Type" will also be specified in this file.

An example of the folders structure in the "visionlabs-samples", "task-result" and "visionlabs-bodies-samples" buckets is given below.

./local_storage/visionlabs-samples/8f4f/

8f4f0070-c464-460b-sf78-fac234df32e9.jpg

8f4f0070-c464-460b-sf78-fac234df32e9.meta.json

8f4f1253-d542-621b-9rf7-ha52111hm5s0.jpg

8f4f1253-d542-621b-9rf7-ha52111hm5s0.meta.json

./local_storage/task-result/1b03/

1b0359af-ecd8-4712-8fc0-08401612d39b

1b0359af-ecd8-4712-8fc0-08401612d39b.meta.json

./local_storage/visionlabs-bodies-samples/6e98/

6e987e9c-1c9c-4139-9ef4-4a78b8ab6eb6.jpg

6e987e9c-1c9c-4139-9ef4-4a78b8ab6eb6.meta.json

A significant amount of memory may be required when storing a large number of samples. A single sample takes about 30 Kbytes of the disk space.

It is also recommended to create backups of the samples. Samples are utilized when the NN version is changed or when you need to recover your database of faces.

Use S3-compatible storage#

To enable the use of S3-compatible storage, you must perform the following steps:

- Make sure that the access key has sufficient authority to access the buckets of the S3-compatible storage.

- Launch the Image Store service (see "Image Store" section in the installation manual).

- Set the "S3" value for the "storage_type" setting of the Image Store service settings.

- Fill in the settings for connecting to an S3-compatible storage (host, Access Key and Secret Key, etc.) in the "S3" section of the Image Store service settings.

- Run the script for creating buckets

lis_bucket_create.py(see the "Create buckets" section in the installation manual).

If necessary, you can disable SSL certificate verification using the "verify_ssl" setting in the "S3" section of the Image Store service settings. This enables you to use a self-signed SSL certificate.

Object TTL#

You can set the object time to live (TTL) in buckets (both local and S3). Objects mean:

- Samples of faces or bodies.

- Images or objects created in the resources "/images" or "/objects".

- Source images.

- Task results.

TTL for objects is calculated relative to the GMT time format.

TTL for objects is set in days in the following ways:

- During the creation of a bucket for all objects at once (basic TTL bucket policy).

- After creating a bucket for specific objects using requests to the corresponding resources.

The number of days is selected from the list in the corresponding requests (see below).

In addition to the number of days, the parameter can take the value "-1", meaning that objects should be stored indefinitely.

Configuring basic TTL bucket policy#

The basic TTL bucket policy can be configured in the following ways:

- Using the

--bucket-ttlflag for thelis_bucket_create.pyscript. For example,python3 ./base_scripts/lis_bucket_create.py -ii --bucket-ttl=2. - Using a request to the Image Store service. For example,

curl -X POST http://127.0.0.1:5020/1/buckets?bucket=visionlabs-samples?ttl=2.

Configuring TTL for specific objects#

TTL for specific objects can be configured using the "ttl" parameter in the following places:

- In the "storage_policy" > "face_sample_policy", "body_sample_policy" and "image_origin_policy" handler policies.

- In the requests "create object", "create images" and "save sample".

- In the requests to create tasks or schedules in the "result_storage_policy" field.

If the "ttl" parameter is not specified, then the basic policy of the bucket in which the object is located (see above) will be applied.

Adding TTL to existing objects#

You can add a TLL to an existing object using PUT requests to the /objects, /images, /samples/{sample_type} resources of the Image Store service. It is not possible to add TTL of task results to already created and executed tasks. You can add TTL of task results to an already created schedule using the request "replace tasks schedule". For tasks created or running at the time of the request, the TTL of task results will not be applied.

You can add a TTL to an existing local bucket using a PUT request to the Image Store resource /buckets.

To add a TTL for a bucket located in S3, you need to perform a migration using the "base_scripts/migrate_ttl_settings" script from the Image Store service. This is because for TTL objects in S3 is applied via tag related filters. The command to perform S3 bucket migration is given in the installation manual. See "Migration to apply TTL to objects in S3" for details on S3 bucket migration.

Supported cloud providers#

Amazon S3 cloud providers, Yandex cloud storage and MinIO are supported.

Migration to apply TTL to objects in S3#

Lifecycle customization for S3 is applied through filters associated with tags (see official documentation). This assumes that objects have a tag with a limited set of values, and buckets have a set of rules based on the value of that tag.

To add tags and rules, you must perform a migration. Migration is strictly necessary to fully apply lifecycle customization for the following reasons:

- Buckets without rules will not delete objects, even if the user specifies a lifetime for a specific object.

- Objects without tags will never be deleted, even if the user specifies a lifetime for the bucket.

You need to add the following tags and rules:

- To support TTL for buckets, you need to add a

vl-expiretag with a default value for all existing objects. - To support TTL for specific objects, you need to add a set or TTL-related lifecycle rules for existing segments:

{

"ID": "vl-expire-<ttl>",

"Expiration": {

"Days": <ttl>,

},

"Filter": {"Tag": {"Key": "vl-expire", "Value": <ttl>}},

"Status": "Enabled",

}

A set of specific tag values associated with an object's TTL is supported: 1, 2, 3, 4, 5, 6, 7, 14, 30, 60, 90, 180, 365.

The migration process consists of two stages:

- Configuration of the bucket life cycle, expanded with a set of life cycle rules related to TTL.

- Assigning a

vl-expiretag to each object in the bucket, if it does not already have one.

Assigning a tag for each object can be skipped if necessary using the -update-tags=0 argument.

See the upgrade manual for example commands to perform the migration.

Permission issues

By default, all S3 resources, including buckets, objects, and lifecycle configuration, are private. If necessary, default rules and tags can be created manually by the resource owner using one of the applicable methods. See the official S3 documentation for details.

Useful links to official documentation:

- Creating a lifecycle configuration

- Put lifecycle configuration

- Managing object tags

- Expiring objects

Expiration of TTL#

When an object's TTL comes to an end, it is marked for deletion. For local buckets, the cleanup task is performed once a day (at 01:00 am). S3 buckets use internal ttl configuration rules. To prevent conflicts or duplication of cleanup tasks when multiple instances or worker processes are involved, a locking mechanism is implemented. This ensures that only one instance or worker process is responsible for performing the local storage cleanup process.

There may be a delay between the expiration date and the date the item is actually deleted. Both for local storage and S3.

Search for expiring objects#

To find out when an object expires, you can use queries with the HEAD methods on the /objects and /images resources. These requests return X-Luna-Expiry-Date response headers, which indicate the date on which the object is no longer eligible for persistence.

External samples#

You can send an external sample to Image Store. The external sample is received using third-party software or the VisionLabs software (e. g., FaceStream).

See the POST request on the "/samples/{sample_type}" resource in "APIReferenceManual.html" for details.

The external sample should correspond to certain standards so that LP could process it. Some of them are listed in the "Sample requirements" section.

The samples received using the VisionLabs software satisfy this requirement.

In case of third-party software, it is not guaranteed that the result of the external sample processing will be the same as for the VisionLabs sample. The sample can be of low quality (too dark, blurry and so on). Low quality leads to incorrect image processing results.

Anyway, it is recommended to consult VisionLabs before using external samples.

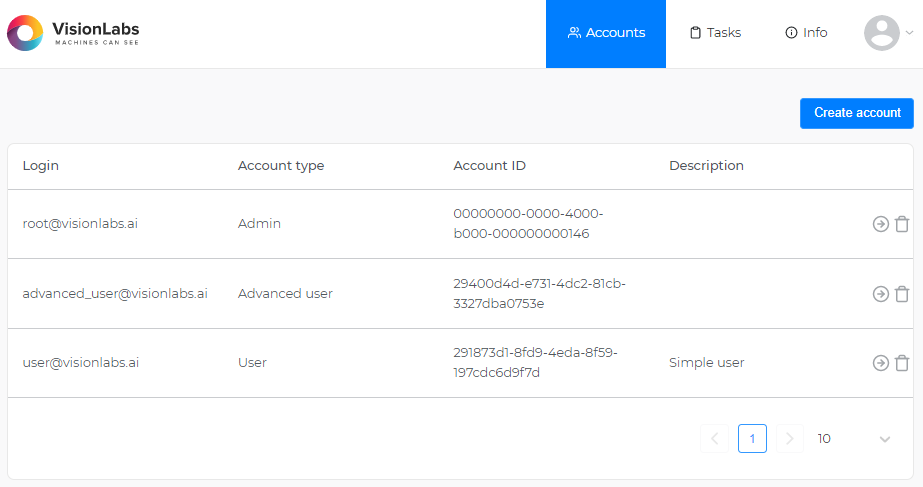

Accounts service#

The Accounts service is intended for:

- Creation, management and storage of accounts.

- Creation, management and storage of tokens and their permissions.

- Verification of accounts and tokens.

See "Accounts, tokens and authorization types" section for more information about the authorization system in LUNA PLATFORM 5.

All created accounts, tokens and their permissions are saved in the Accounts service database.

JWT Tokens algorithms#

The JWT (JSON Web Tokens) authentication mechanism supports various algorithms for token signing. This section describes the default algorithm used and the necessary steps to use an alternative algorithm.

Default algorithm#

By default, the service uses the HS256 algorithm to sign JWT tokens. If you want to use asymmetric cryptographic encryption, you can use the ES256 algorithm.

Use ES256 algorithm#

To use the ES256 algorithm, follow these steps:

-

Generate a private ECDSA key.

First, you need to generate a private ECDSA key using the

prime256v1curve. This can be done using command-line tools such as OpenSSL.Example command:

openssl ecparam -genkey -name prime256v1 -out ec_private.pemYou can also generate a key protected by a password, for example:

openssl ecparam -genkey -name prime256v1 | openssl ec -aes256 -out ec_private_enc.pem -

Encode the private key in Base64.

After generating the private key, encode it in Base64 format. This can be achieved with tools available in most operating systems.

Example command:

base64 -w0 ecdsa_private.pem > ecdsa_private_base64 -

Set the environment variable.

The encoded private key must be specified in the

ACCOUNTS_JWT_ECDSA_KEYenvironment variable when starting the container. This allows the service to use the key for signing JWT tokens with the ES256 algorithm.Additionally, if your private key is protected with a password, you can specify the password in the

ACCOUNTS_JWT_ECDSA_KEY_PASSWORDenvironment variable.Example container run command with environment variables:

docker run \ --env=CONFIGURATOR_HOST=127.0.0.1 \ --env=ACCOUNTS_JWT_ECDSA_KEY=jwt_ecdsa_key \ --env=ACCOUNTS_JWT_ECDSA_KEY_PASSWORD=ecdsa_key_password \ ...

By following these steps, the service will be able to sign JWT tokens using the ES256 algorithm, providing enhanced security through asymmetric cryptography.

Impact of changing algorithm type#

Switching the signing algorithm from HS256 to ES256 (or vice versa) has a significant impact on token validation. All existing tokens signed with the previous algorithm will become invalid after the changes are made. This happens because the token signature verification mechanism expects the structure and cryptographic base of the token to match the newly specified algorithm.

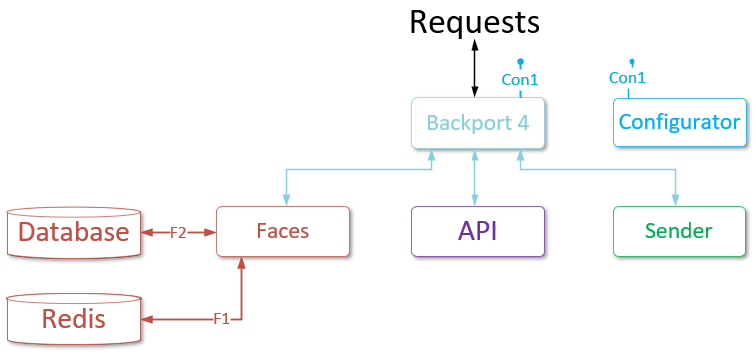

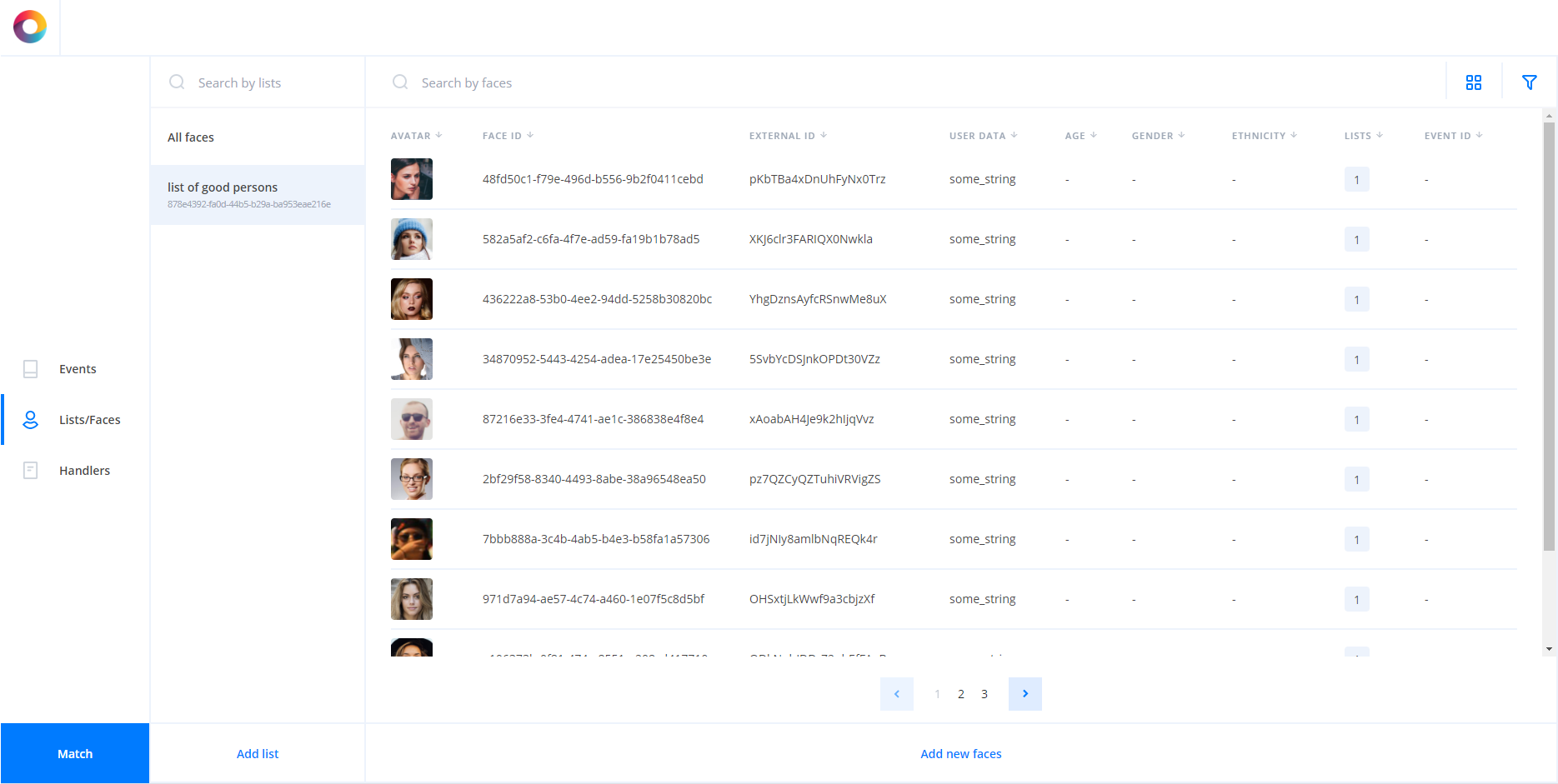

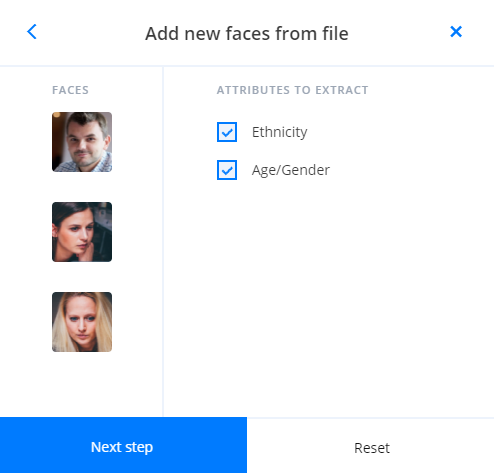

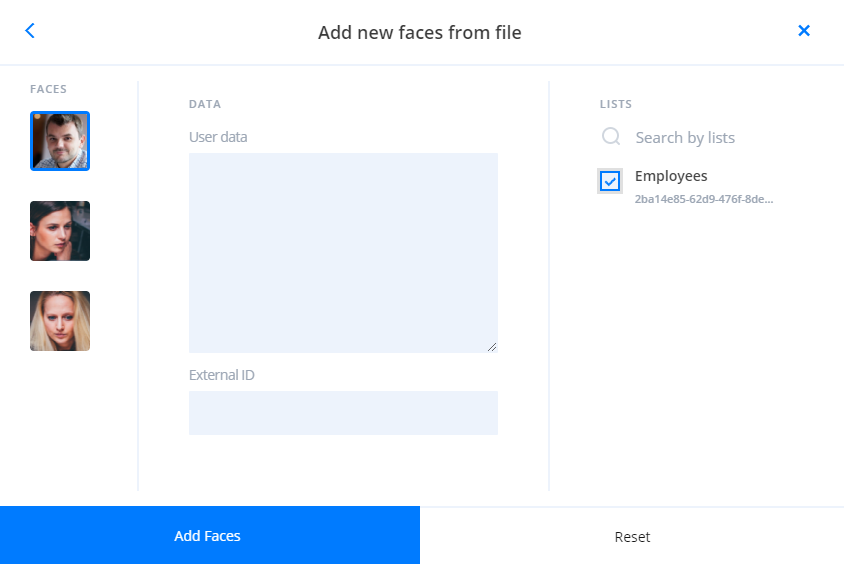

Faces service#

Faces service is used for:

- Creating temporary attributes.

- Creating faces.

- Creating lists.

- Attaching faces to lists.

- Managing of the general database that stores faces with the attached data and lists.

- Receive information about the existing faces and lists.

Matching services#

Python Matcher has the following features:

- Matching according to the specified filters. This matching is performed directly on the Faces or the Events database. Matching by DB is beneficial when several filters are set.

- Matching by lists. In this case, it is recommended that descriptors are save in the Python Matcher cache.

Python Matcher Proxy is used to route requests to Python Matcher services and matching plugins.

Python Matcher#

Python Matcher utilizes Faces DB for filtration and matching when faces are set as candidates for matching and filters for them are specified. This feature is always enabled for Python Matcher.

Python Matcher utilizes Events DB for filtration and matching when events are set as candidates for matching and filters for them are specified. The matching using the Events DB is optional, and it is not used when the Events service is not utilized.

A VLMatch matching function is required for matching by DB. It should be registered for the Faces DB and the Events DB. The function utilizes a library that should be compiled for your current DB version. You can find information about it in the installation manual in "VLMatch library compilation", "Create VLMatch function for Faces DB", and "Create VLMatch function for Events DB" sections.

Python Matcher service additionally uses workers that process requests.

Python Matcher Proxy#

The API service sends requests to the Python Matcher Proxy if it is configured in the API configuration. Then the Python Matcher Proxy service redirects requests to the Python Matcher service or to matching plugins (if they are used).

If the matching plugins are not used, then the service route requests only to the Python Matcher service. Thus, you don't need to use Python Matcher Proxy unless you intend to use matching plugins. See the "Matching plugins" section for a description of how the matching plugins work.

List caching#

When faces are specified as candidates for matching and list IDs for them are specified as filters, Python Matcher performs a matching by lists.

By default, when the Python Matcher service is launched, all descriptors in all lists are cached in its memory.

Caching is managed by the "DESCRIPTORS_CACHE" section.

The Python Matcher service will not start until it loads all available descriptors into the cache.

When executing a list matching request, the Python Matcher service automatically adds it to the queue, from where it is picked up by the worker and sent to the Cached Matcher entity to perform a matching on cached data.

After performing the matching, the worker takes the results and returns them to the Python Matcher service and the user.

This caching enabling you to significantly increase the performance of the matching.

If necessary, you can process only specific lists using the parameter "cached_data > faces_lists > include" or exclude lists using the parameter "cached_data > faces_lists > exclude". The latter is especially useful when working with the LUNA Index Module to implement the logic of processing parts of lists using Python Matcher, and parts using LIM Indexed Matcher.

For more information about LIM, see "Matching a large set of descriptors".

Workers cache#

When multiple workers are launched for the Python Matcher service, each of the workers uses the same descriptors cache.

This change can both speed up and slow down the service. If you need to ensure that the cache is stored in each of the Python Matcher workers, you should run each of the server instances separately.

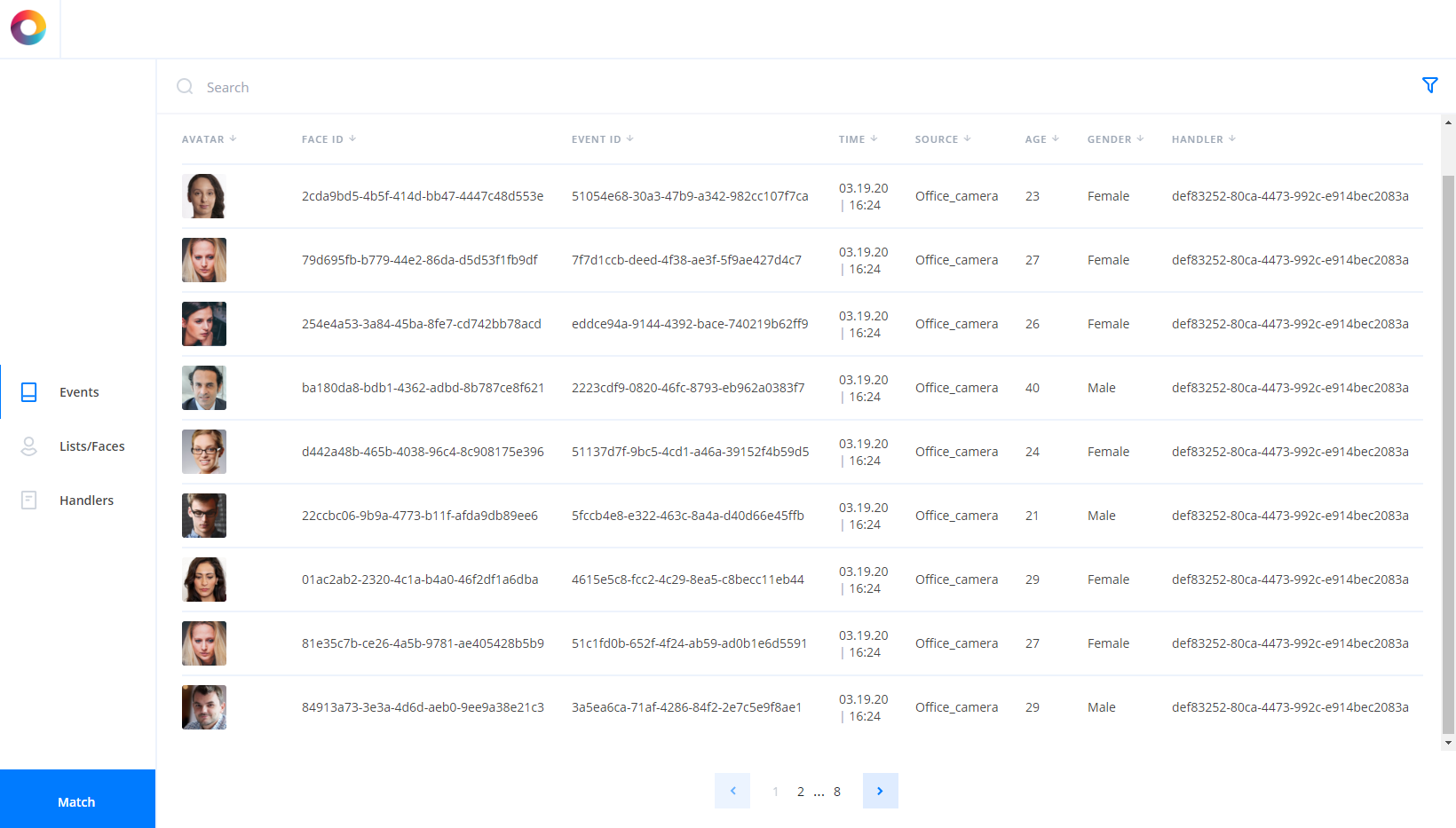

Events service#

The Events service is used for:

- Storage of all the created events in the Events database.

- Returning all the events that satisfy filters.

- Gathering statistics on all the existing events according to the specified aggregation and frequency/period.

- Storage of descriptors created for events.

As the event is a report, you can't modify already existing events.

The Events service should be enabled in the API service configuration file. Otherwise, events will not be saved to the database.

Database for Events#

PostgreSQL is used as a database for the Events service.

The speed of request processing is primarily affected by:

- The number of events in the database.

- Lack of indexes for PostgreSQL.

PostgreSQL shows acceptable requests processing speed with the number of events from 1 000 000 to 10 000 000. If the number of events exceeds 10 000 000, the request to PostgreSQL may fail.

The speed of the statistics requests processing in the PostgreSQL database can be increased by configuring the database and creating indexes.

Geo position#

You can add a geo position during event creation.

The geo position is represented as a JSON with GPS coordinates of the geographical point:

- "longitude" — Geographical longitude in degrees.

- "latitude" — Geographical latitude in degrees.

The geo position is specified in the "location" body parameter of the event creation request. See the "Create new events" section of the Events service reference manual.

You can use the geo position filter to receive all the events that occurred in the required area.

Geo position filter#

A geo position filter is a bounding box specified by coordinates of its center (origin) and some delta.

It is specified using the following parameters:

- "origin_longitude"

- "origin_latitude"

- "longitude_delta"

- "latitude_delta"

The geo position filter can be used when you get events, get statistics on events, and perform events matching.

Geo position filter is considered as properly specified if:

- both "origin_longitude" and "origin_latitude" are set.

- neither "origin_longitude", "origin_latitude", "longitude_delta", or "latitude_delta" is set.

If both "origin_longitude" and "origin_latitude" are set and "longitude_delta" is not set — the default value is applied (see the default value in the OpenAPI documentation).

Read the following recommendations before using geo position filters.

The general recommendations and restrictions for geo position filters are:

- Do not create filters with a vertex or a border on the International Date Line (IDL), the North Pole or the South Pole. They are not fully supported due to the features of database spatial index. The filtering result may be unpredictable.

- Geo position filters with edges more than 180 degrees long are not allowed.

- It is highly recommended to use the geo position filter citywide only. If a larger area is specified, the filtration results on the borders of the area can be unexpected due to the spatial features.

- Avoid creating a filter that is too extended along longitude or latitude. It is recommended to set the values of deltas close to each other.

The last two recommendations exist due to the spatial features of the filter. According to these features, when one or two deltas are set to large values, the result may differ from the expected though it will be correct. See the "Filter features" section for details.

Filter performance#

Geo position filter performance depends on the spatial data type used to store event geo position in the database.

Two spatial data types are supported:

- GEOMETRY. Spatial object with coordinates expressed as (longitude, latitude) pairs, defined in the Cartesian plane. All calculations use Cartesian coordinates.

- GEOGRAPHY. Spatial object with coordinates expressed as (longitude, latitude) pairs, defined as on the surface of a perfect sphere, or a spatial object in the WGS84 coordinate system.

For a detailed description, see geometry vs geography.

Geo position filter is based on the ST_Covers PostGIS function supported for both Geometry and Geography type.

Filter features#

Geo position filter has some features caused by PostGIS.

When geography type is used and the geo position filter covers a wide portion of the planet surface, filter result may be unexpected but geographically correct due to some spatial features.

The following example illustrates this case.

An event with the following geo position was added in the database:

{

"longitude": 16.79,

"latitude": 64.92,

}

We apply a geo position filter and try to find the required point on the map. The filter is too extended along the longitude:

{

"origin_longitude": 16.79,

"origin_latitude": 64.92,

"longitude_delta": 2,

"latitude_delta": 0.01,

}

This filter will not return the expected event. The event will be filtered due to spatial features. Here is the illustration showing that the point is outside the filter.

You should consider this feature to create a correct filter.

For details, see Geography.

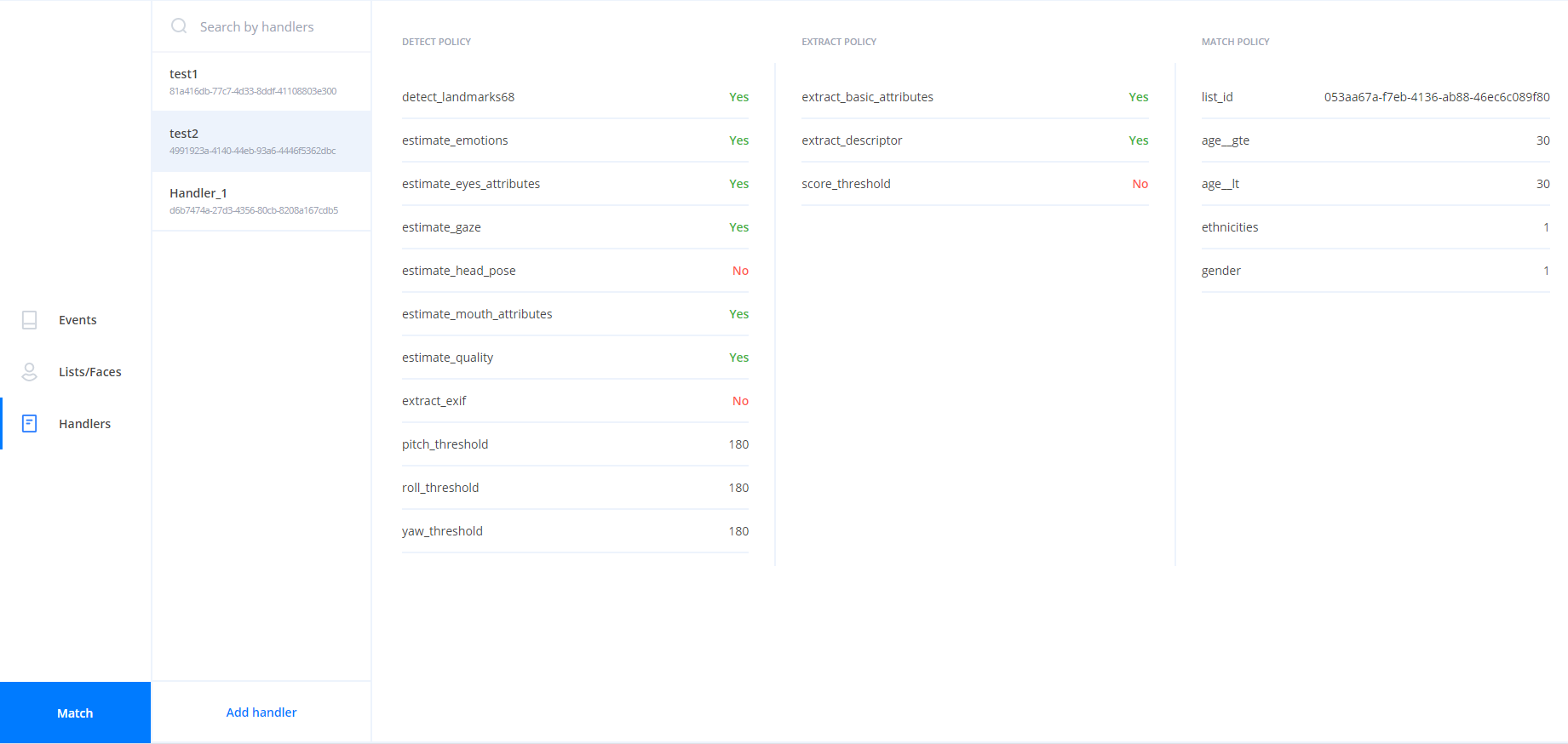

Events creation#

Events are created using handlers. Handlers are stored in the Handlers database. You should specify the required handler ID in the event creation request. All the data stored in the event will be received according to the handler parameters.

You should perform two separate requests for event creation.

The first request creates a handler. Handler includes policies that describes how the image is processed hence defining the LP services used for the processing.

The second request creates new events using the existing handler. An event is created for each image that has been processed.

You can specify the following additional data for each event creation request:

- external ID (for created faces)

- user data (for created faces)

- source (for created events)

- tags (for created events)

The handler is processed policy after policy. All the data from the request is processed by a policy before going to the next policy. The "detect" policy is performed for all the images from the request, then "multiface" policy is applied, then the "extract" policy is performed for all the received samples, etc. For more information about handlers, see the "Handlers description" section.

Events meta-information#

If any additional data needs to be stored along with the event, the "meta" field should be used. The "meta" field stores data in the JSON format. The total size of the data stored in the "meta" field for one event cannot exceed 2 MB. It is assumed that with the help of this functionality, the user will create his own data model (event structure) and will use it to store the necessary data.

Note that you cannot specify field names with spaces in the "meta" field.

Data in the "meta" field can be set in the following ways:

- In the "generate events" request body with the content type

application/jsonormultipart/form-data. - In the "save event" request body.

- Using a custom plugin or client application.

In the "generate events" request body, it is possible to set the "meta" field both for specific images and for all images at once (mutual meta-information). For requests with aggregation enabled, only mutual meta-information will be used for the aggregated event, and meta-information for specific images will be ignored. See the detailed information in the "generate events" request body in the OpenAPI specification.

Example of recording the "meta" field:

{

"meta": {

"user_info": {

"temperature": 36.6

}

}

}

In order to store multiple structures, it is necessary to explicitly separate them to avoid overlapping fields. For example, as follows:

{

"struct1": {

...

},

"struct2": {

...

}

}

Search by "meta" field#

You can get the contents of the "meta" field using the appropriate filter in the "get events" request.

The filter should be entered using a specific syntax — meta.<path.to.field>__<operator>:<type>, where:

meta.— An indication that the "meta" field of the Events database is being accessed.<path.to.field>— Path to the object. A dot (.) is used to navigate nested objects. For example, in the string{"user_info":{"temperature":"36.6"}}to refer to thetemperatureobject, use the following filtermeta.user_info.temperature.__<operator>— One of the following operators —eq(default),neq,like,nlike,in,nin,gt,gte,lt,lte. For example,meta.user_info.temperature__gte;:type— one of the following data types —string,integer,numeric. For example,meta.user_info.temperature__gte:numeric.

For each operator, the use of certain data types is available. See the table of operator dependency on data types in the OpenAPI specification.

If necessary, you can build an index to improve the search. See the Events developer manual for details on building an index.

Important notes#

When working with the "meta" field, remember the following:

- you need to keep data consistent with given schemes; in case of a mismatch, PostgreSQL will not allow inserting a row with a type that cannot be added to the existing index (if any);

- if necessary, you can migrate data;

- if necessary, you can build an index;

- specify the data type when performing a request (by default, all values are assumed to be strings);

- you need to pay attention to the names of the fields; fields to be filtered by must not contain reserved keywords like

:int, double underscores, special symbols, and so on.

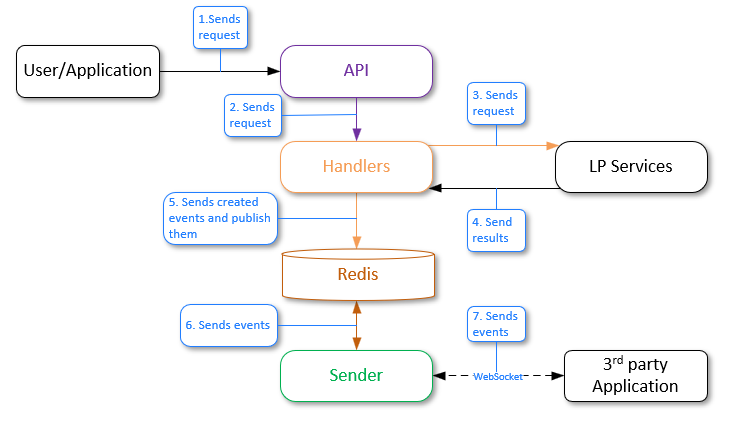

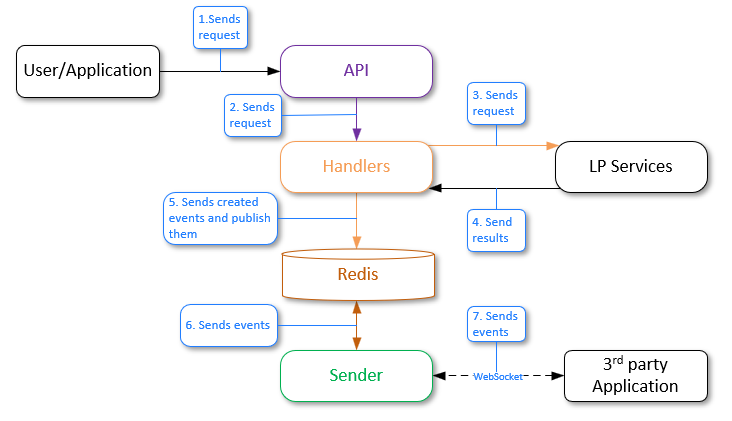

Sender service#

The Sender service is an additional service that is used to send events via web sockets. This service communicates with the Handlers service (in which events are created) through the pub/sub mechanism via the Redis DB channel.

If necessary, you can send notifications over the HTTP protocol. See the "Send events to third-party service" section for more details.

Events are created based on handlers. To receive notifications, the "callbacks" policy with "luna-ws-notification" must be enabled. This policy has filters that enable you to send notifications only under certain conditions, for example, to send only if the candidate is very similar to the reference (the "similarity__lte" parameter).

In previous versions of LUNA PLATFORM, the "notification_policy" was used. It is now considered deprecated and is not recommended for use. The main advantage of the callback mechanism over the deprecated "notification_policy" is the ability to specify multiple callbacks with different filters in the handler creation request, resulting in only one event being sent.

You should configure web sockets connection using special request. It is recommended create web sockets connection using the "/ws" resource of the API service. You can specify filters (query parameters) in the request, i.e. you can configure the Sender service to receive only certain events. See OpenAPI specification for detailed information about the configuration of creating a connection to a web socket.

Configuring web sockets directly via Sender is also available (see "/ws" of the Sender service). It can be used to reduce the load on the API service.

When an event is created it can be:

-

Saved to the Events database. The Events service should be enabled to save an event.

-

Returned in the response without saving to the database.

In both cases, the event is sent via the Redis DB channel to the Sender service.

In this case, the Redis DB acts as a connection between Sender and Handlers services and does not store transferred events.

The Sender service is independent of the Events service. Events can be sent to Sender even if the Events service is disabled.

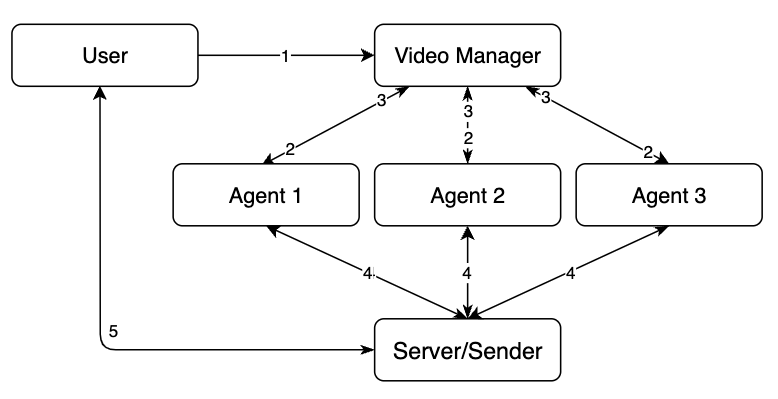

Creating handlers and specifying filters for sending notifications

- The user sends the "create handler" request to the API service, where it enables the "callbacks" and sets filters according to which events will be sent to the Sender service.

- The API service sends a request to the Handlers service.

- The Handlers service sends a response to the API service.

- The API service sends the "handler_id" to the user.

The user saves the ID "handler_id", which is necessary for creating events.

Activation of subscription to events and filtering of their sending

- The user or application sends a request "ws handshake" to the API service and sets filters through which it will be possible to filter the received data from the Handlers service.

- The API service sends a request to the Sender service.

- The Sender service establishes a connection via web sockets with the user application.

Now, when an event is generated, it will be automatically redirected to the Sender service (see below) in accordance with the specified filters.

Event generation and sending to Sender

The general workflow is as follows:

- A user or an application sends the "generate events" request to the API service.

- The API service sends the request to the Handlers service.

- The Handlers service sends requests to the corresponding LP services.

- LP services process the requests and send results. New events are created.

- The Handlers service sends an event to the Redis database using the pub/sub model. Redis has a channel to which the Sender service is subscribed, and it is waiting for messages to be received from this channel.

- Redis sends the received events to Sender by the channel.

- Third-party party applications should be subscribed to the Sender service via web sockets to receive events. If there is a subscribed third-party party application, Sender sends events to it according to the specified filters.

See the OpenAPI documentation for information about the JSON structure returned by the Sender service.

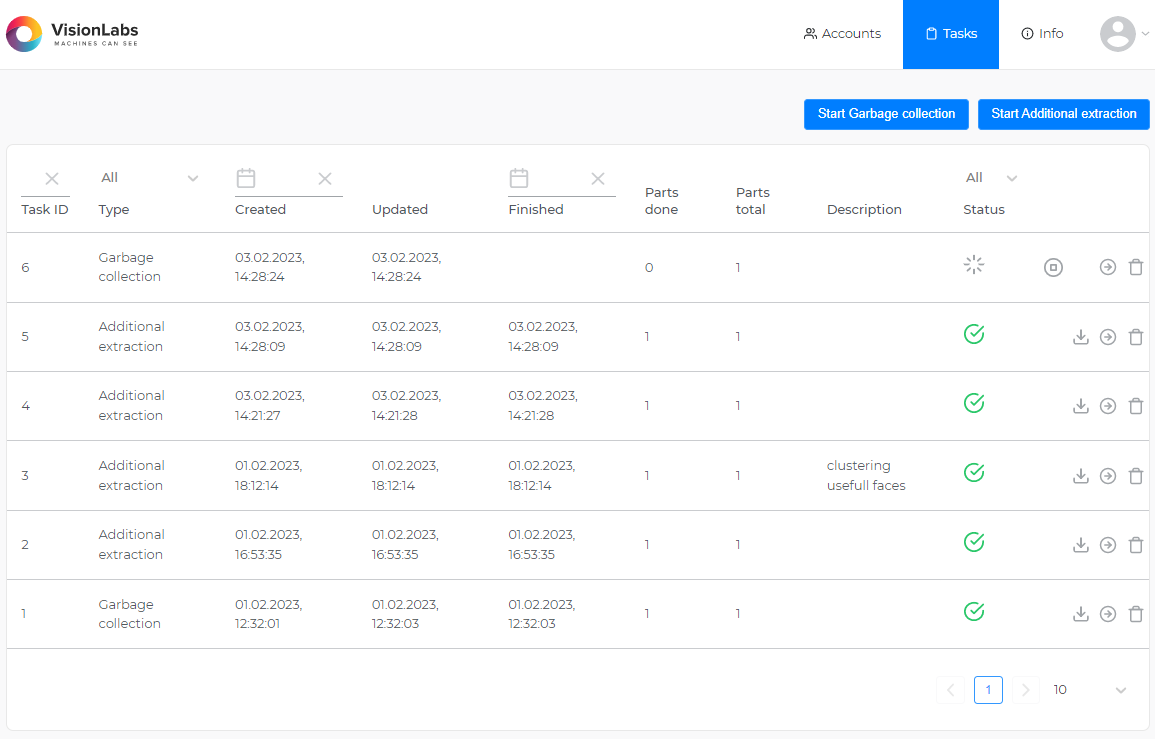

Tasks service#

The Tasks service is used for long tasks processing.

General information about tasks#

As tasks processing takes time, the task ID is returned in the response to the task creation.

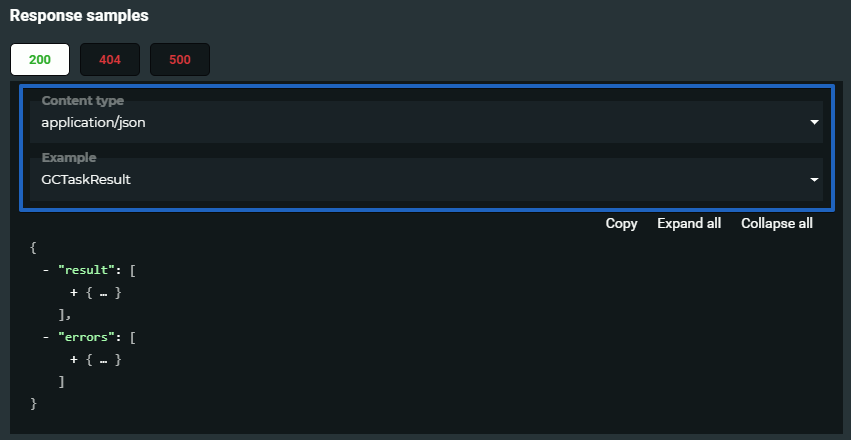

After the task processing is finished, you can receive the task results using the "task " > "get task result" request. You should specify the task ID to receive its results.

You can find the examples of tasks processing results in the response section of "task " > "get task result" request. You should select the task type in the Response samples section of documentation.

You should make sure that the task was finished before requesting its results:

- You can check the task status by specifying the task ID in the "tasks" > "get task" request. There are the following task statuses:

| Tasks status | Value |

|---|---|

| pending | 0 |

| in progress | 1 |

| cancelled | 2 |

| failed | 3 |

| collect results | 4 |

| done | 5 |

- You can receive information about all the tasks using the "tasks" > "get tasks" request. You can set filter to receive information about tasks of interest only.

Clustering task#

As the result of the task a cluster with objects selected according to the specified filters for faces or events is created. Objects corresponding to all of the filters will be added to the cluster. Available filters depend on the object type: events or faces.

You can receive the task status or result using additional requests (see the "General information about tasks").

You can use the reporter task to receive the report about objects added to clusters.

Clustering is performed in several steps:

-

Objects with descriptors are collected according to provided filters

-

Every object is matched with all the other objects

-

Create clusters as groups of "connected components" from the similarity graph.

Here "connected" means that similarity is greater than provided threshold or default "DEFAULT_CLUSTERING_THRESHOLD" from the config.

- If needed, download existing images corresponding to each object: avatar for a face, first sample for an event.

As a result of the task an array of clusters is returned. A cluster includes IDs of objects (faces or events) whose similarity is greater then the specified threshold. You can use the information for further data analysis.

{

"errors": [],

"result": {

"clusters": [

[

"6c721b90-f5a0-409a-ab70-bc339a70184c"

],

[

"8bc6e8df-410b-4065-b592-abc5f0432a1c"

],

[

"e4e3fc66-53b4-448c-9c88-f430c00cb7ea"

],

[

"02a3a1c4-93d7-4b69-99ec-21d5ef23852e",

"144244cb-e10e-478c-bdac-18cd2eb27ee6",

"1f4cdbcb-7b1e-40cc-873b-3ff7fa6a6cf0"

]

],

"total_objects": 6,

"total_clusters": 4

}

}

The clustering task result can also include information about errors occurred during the objects processing.

For such a task, you can create a schedule.

Reporter task#

As a result of the task, the report on the clustering task is created. You can select data that should be added to the report. The report has CSV format.

You can receive the task status or result using additional requests (see the "General information about tasks").

You should specify the clustering task ID and the columns that should be added to the report. The selected columns correspond to the general events and faces fields.

Make sure that the selected columns correspond to the objects selected in the clustering task.

You can also receive the images for all the objects in clusters if they are available.

Exporter task#

The task enables you to collect event and/or face data and export them from LP to a CSV file. The file rows represent requested objects and corresponding samples (if they were requested).

This task uses memory when collecting data. So, its possible that Tasks Worker will be killed by OOM (Out-Of-Memory) killer if you request a lot of data.

You can export event or face data using the "/tasks/exporter" request. You should specify what type of object is required by setting objects_type parameter when creating a request. You can also narrow your request by providing filters for faces and events objects. See the "exporter task" request in the API service reference manual.

As a result of the task a zip archive containing a CSV file is returned.

You can receive the task status or result using additional requests (see the "General information about tasks").

When executing the Exporter task with a large number of faces in the Faces database (for example, 90,000,000 faces), the execution time of requests to the Faces service can be significantly increased. To speed up request execution, you can set the PostgreSQL setting "parallel_setup_cost" to

500. However, be aware that changing this setting may have other consequences, so you should be careful when changing the setting.For such a task, you can create a schedule.

Cross-matching task#

When the task is performed, all the references are matched with all the candidates. References and candidates are set using filters for faces and events.

Matching is performed only for objects that contain extracted descriptors.

You can specify the maximum number of matching candidates returned for every match using the limit field.

You can set a threshold to specify the minimal acceptable value of similarity. If the similarity of two descriptors is lower then the specified value, the matching result will be ignored and not returned in the response. References without matches with any candidates are also ignored.

Cross-matching is performed in several steps:

- collect objects having descriptors using provided filters

- match every reference object with every candidate object

- match results are sorted (lexicographically) and cropped (limit and threshold are applied)

You can receive the task status or results using additional requests (see the "General information about tasks").

As a result an array is returned. Each element of the array includes a reference and top similar candidates for it. Information about errors occurred during the task execution is also returned in the response.

{

"result": [

{

"reference_id": "e99d42df-6859-4ab7-98d4-dafd18f47f30",

"candidates": [

{

"candidate_id": "93de0ea1-0d21-4b67-8f3f-d871c159b740",

"similarity": 0.548252

},

{

"candidate_id": "54860fc6-c726-4521-9c7f-3fa354983e02",

"similarity": 0.62344

}

]

},

{

"reference_id": "345af6e3-625b-4f09-a54c-3be4c834780d",

"candidates": [

{

"candidate_id": "6ade1494-1138-49ac-bfd3-29e9f5027240",

"similarity": 0.7123213

},

{

"candidate_id": "e0e3c474-9099-4fad-ac61-d892cd6688bf",

"similarity": 0.9543

}

]

}

],

"errors": [

{

"error_id": 10,

"task_id": 123,

"subtask_id": 5,

"error_code": 0,

"description": "Faces not found",

"detail": "One or more faces not found, including face with id '8f4f0070-c464-460b-bf78-fac225df72e9'",

"additional_info": "8f4f0070-c464-460b-bf78-fac225df72e9",

"error_time": "2018-08-11T09:11:41.674Z"

}

]

}

For such a task, you can create a schedule.

Linker task#

The task enables you to attach faces to lists according to the specified filters.

You can specify creation of a new list or specify the already existing list in the requests.

You can specify filters for faces or events to perform the task. When an event is specified for linking to list a new face is created based on the event.

If the create_time_lt filter is not specified, it will be set to the current time.

As the result of the task you receive IDs of faces linked to the list.

You can receive the task status or result using additional requests (see the "General information about tasks").

Task execution process for faces:

- A list is created (if create_list parameter is set to 1) or the specified

list_idexistence is checked. - Face ID boundaries are received. Then one or several subtasks are created with about 1000 face ids per each. The number depends on face ID spreading.

-

For each subtask:

- Face IDs are received. They are specified for the current subtask by filters in the subtask content.

- The request is sent to the Luna Faces to link specified faces to the specified list.

- The result for each subtask is saved to the Image Store service.

-

After the last subtask is finished, the worker collects results of all the subtasks, merges them and puts them to the Image Store service (as task result).

Task execution process for events:

- A list is created (if create_list parameter is set to 1) or the specified

list_idexistence is checked. - Events page numbers are received. Then one or several subtasks are created.

-

For each subtask:

- Event with their descriptors are received from the Events service.

- Faces are created using the Faces service. Attribute(s) and sample(s) are added to the faces.

- The request is sent to the Luna Faces to link specified faces to the specified list.

- The result for each subtask is saved to the Image Store service.

-

After the last subtask is finished, the worker collects results of all the subtasks, merges them and puts them to the Image Store service (as task result).

For such a task, you can create a schedule.

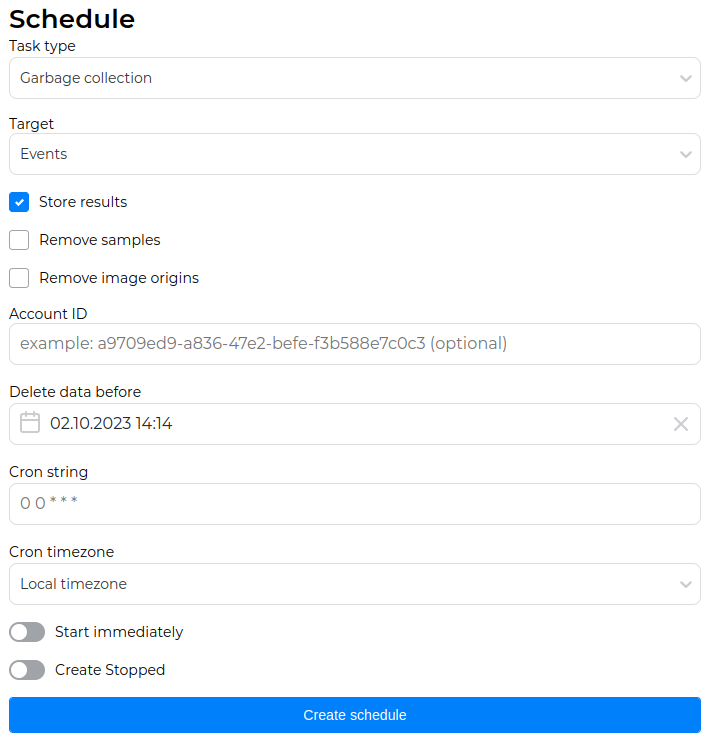

Garbage collection task#

During the task processing, faces, events or descriptors can be deleted.

- When descriptors are set as a GC target, you should specify the descriptor version. All the descriptors of the specified version will be deleted.

- When events are set as a GC target, you should specify one or several of the following parameters:

- Account ID.

- Upper excluded boundary of event creation time.

- Upper excluded boundary of the event appearance in the video stream.

- Handler ID used for the event creation.

- When faces are set as a GC target, you should specify one or several of the following parameters:

- Upper excluded boundary of face creation time.

- Lower included boundary of face creation time.

- User data.

- List ID.

If necessary, you can delete samples along with faces or events. You can also delete image origins for events.

Garbage collection task with faces or events set as the target can be processed using the API service API, while the Admin or Task services API can be used to set faces, events and descriptors as the target. Thus the specified objects will be deleted for all the existing accounts.

You can receive the task status or result using additional requests (see the "General information about tasks").

For such a task, you can create a schedule.

Additional extraction task#

The Additional extraction task re-extracts descriptors extracted using the previous neural network model using a new version of the neural network. This enables you to save previously used descriptors when updating the neural network model. If there is no need to use the old descriptors, then you can not perform this task and only update the neural network model in the Configurator settings.

This section describes how to work with the Additional extraction task. See detailed information about neural networks, the process of updating a neural network to a new model and relevant examples in the "Neural networks" section.

Re-extraction can be performed for face and event objects. You can re-extract the descriptors of faces, descriptors of bodies (for events) or basic attributes if they were not extracted earlier.

The samples for descriptors should be stored for the task execution. If any descriptors do not have source samples, they cannot be updated to a new NN version.

The re-extraction tasks are used for the update to a new neural network for descriptors extraction. All the descriptors of the previous version will be re-extracted using a new NN.

It is highly recommended not to perform any requests changing the state of databases during the descriptor version updates. It can lead to data loss.

Create backups of LP databases and the Image Store storage before launching the additional extraction task.

When processing the task, a new neural network descriptor is extracted for each object (face or event) whose descriptor version matches the version specified in the "DEFAULT_FACE_DESCRIPTOR_VERSION" (for faces) or "DEFAULT_HUMAN_DESCRIPTOR_VERSION" (for bodies) settings. Descriptors whose version does not match the version specified in these settings are not re-extracted. They can be removed using the Garbage collection task.

Request to the Admin service

You need to make a request to the "additional_extract" resource, specifying the following parameters in the request body:

- "content" > "extraction_target" – Face descriptors, body descriptors, basic attributes.

- "content" > "options" > "descriptor_version" – New neural network version (not applicable for basic attributes).

- "content" > "filters" > "object_type" – Faces or events.

If necessary, you can additionally filter the object type by "account_id", "face_id__lt", etc.

See the "create additional extract task" request in the Admin service OpenAPI specification for more information.

You can receive the task status or result using additional requests (see the "General information about tasks").

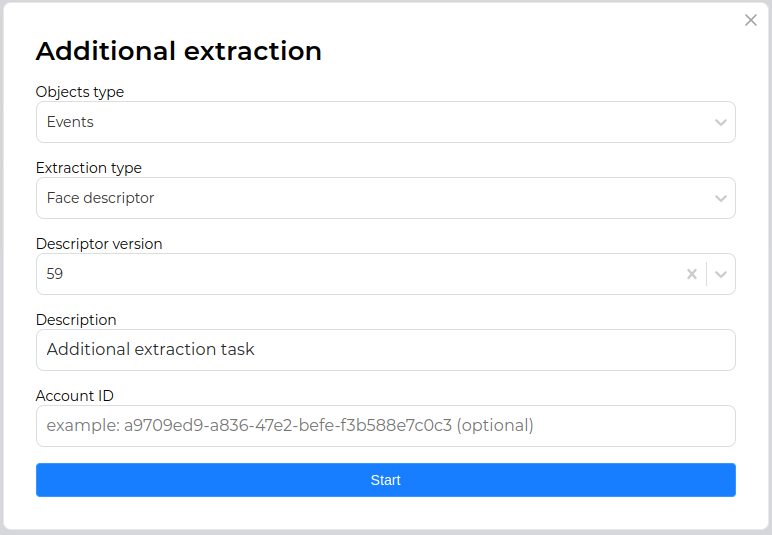

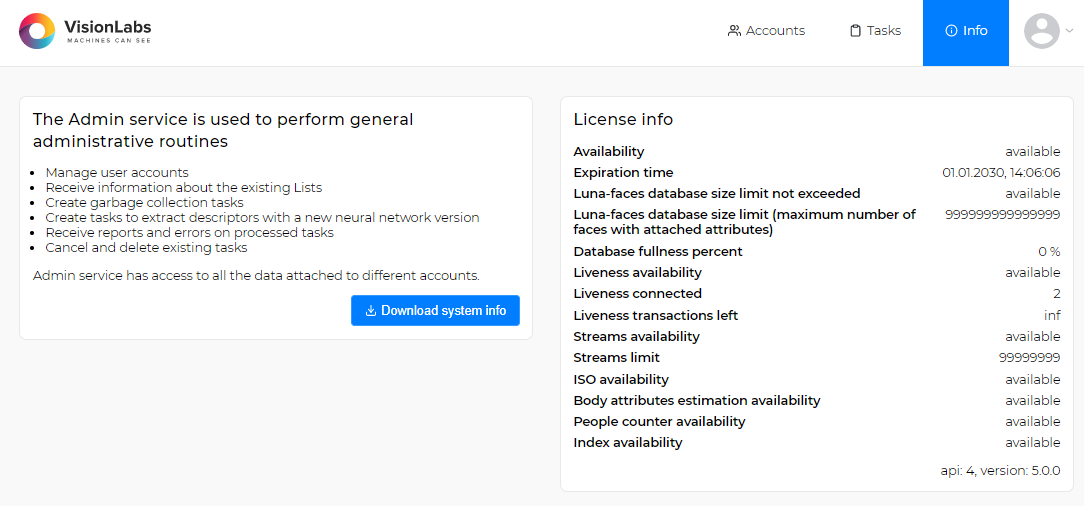

Admin user interface

You need to do the following:

-

Go to the Admin user interface:

http://<admin_server_ip>:5010/tasks. -

Run the additional extraction task using the corresponding button.

-

In the window that appears, set the object type (face or event), the extraction type (face descriptor, body descriptor or basic attributes), new neural network model (not applicable for basic attributes) and click "Start", confirming the start of the task.

If necessary, you can additionally filter the object type by "account_id".

See the detailed information about the Admin user interface in the "Admin user interface" section.

For such a task, you can create a schedule.

ROC-curve calculating task#

As a result of the task, the Receiver Operating Characteristic curve with TPR (True Positive Rate) against the FPR (False Positive Rate) is created.

See additional information about ROC-curve creation in "TasksDevelopmentManual".

ROC calculation task

ROC (or Receiver Operating Characteristic) is a performance measurement for classification tasks at various thresholds settings. The ROC-curve is plotted with TPR (True Positive Rate) against the FPR (False Positive Rate). TPR is a true positive match pair count divided by a count of total expected positive match pairs, and FPR is a false positive match pair count divided by a count of total expected negative match pairs. Each point (FPR, TPR) of the ROC-cure corresponds to a certain similarity threshold. See more at wiki.

Using ROC the model performance is determined by looking at:

- Area under the ROC-curve (or AUC).

- Type I and type II error rates equal point, i.e. the ROC-curve and the secondary main diagonal intersection point.

The model performance also determined by hit into the top-N probability, i.e. probability of hit a positive match pair into the top-N for any match result group sorted by similarity.

It requires "markup" to make a ROC task. One can optionally specify "threshold_hit_top" (default 0) to calculate hit into the top-N probability, the match "limit" (default 5), "key_FPRs" — list of key FPR values to calculate ROC-curve key points, and "filters" with "account_id". Also, it needs "account_id" for task creation.

You can receive the task status or result using additional requests (see the "General information about tasks").

Markup

Markup is expected in the following format:

[{'face_id': <face_id>, 'label': <label>}]

Label (or group id) can be a number or any string.

Example:

[{'face_id': '94ae2c69-277a-4e46-817d-543f7d3446e2', 'label': 0},

{'face_id': 'cd6b52be-cdc1-40a8-938b-a97a1f77d196', 'label': 1},

{'face_id': 'cb9bda07-8e95-4d71-98ee-5905a36ec74a', 'label': 2},

{'face_id': '4e5e32bb-113d-4c22-ac7f-8f6b48736378', 'label': 3},

{'face_id': 'c43c0c0f-1368-41c0-b51c-f78a96672900', 'label': 2}]

For such a task, you can create a schedule.

Estimator task#

The estimator task enables you to perform batch processing of images using the specified policies.

As a result of the task performing, JSON is returned with data for each of the processed images and information about the errors that have occurred.

In the request body, you can specify the handler_id of an already existing static or dynamic handler. For the dynamic handler_id, the ability to set the required policies is available. In addition, you can create a static handler specifying policies in the request.

The resource can accept five types of sources with images for processing:

- ZIP archive

- S3-like storage

- Network disk

- FTP server

- Samba network file system

To obtain correct results of image processing using the Estimator task, all processed images should be either in the source format or in the format of samples. The type of transferred images is specified in the request in the "image_type" parameter.

For such a task, you can create a schedule. When creating a schedule, it is not possible to specify a ZIP archive as an image source.

ZIP archive as image source of estimator task

The resource accepts for processing a link to a ZIP archive with images. The size of the archive is set using the "ARCHIVE_MAX_SIZE" parameter in the "config.py" configuration file of the Tasks service. The default size is 100 GB. An external URL or the URL to an archive saved in the Image Store can be used as a link to the archive. In the second case, the archive should first be saved to the LP using a POST request to the "/objects" resource.

When using an external URL, the ZIP archive is first downloaded to the Tasks Worker container storage, where the images are unpacked and processed. After the end of the task, the archive is deleted from the repository along with the unpacked images.

It is necessary to take into account the availability of free space for the above actions.

The archive can be password protected. The password can be passed in the request using the "authorization" -> "password" parameter.

S3-like storage as image source of estimator task

The following parameters can be set for this type of source:

- "bucket_name" — Bucket name/Access Point ARN/Outpost ARN (required).

- "endpoint" — Storage endpoint (only when specifying the bucket name).

- "region" — Bucket region (only when specifying the bucket name).

- "prefix" — File key prefix. It can also be used to load images from a specific folder, such as "2022/January".

The following parameters are used to configure authorization:

- Public access key (required)

- Secret access key (required)

- Authorization signature version ("s3v2"/"s3v4")

It is also possible to recursively download images from nested bucket folders and save original images.

For more information about working with S3-like repositories, see AWS User Guide.

Network disk as image source of estimator task

The following parameters can be set for this type of source:

- "path" — Absolute path to the directory with images in the container (required).

- "follow_links" — Enables/disables symbolic link processing.

- "prefix" — File key prefix.

- "postfix" — File key postfix.

See an example of using prefixes and postfixes in the "/tasks/estimator" resource description.

When using a network disk as an image source and launching Tasks and Tasks Worker services through Docker containers, it is necessary to mount the directory with images from the network disk to the local directory and synchronize it with the specified directory in the container. You can mount a directory from a network disk in any convenient way. After that, you can synchronize the mounted directory with the directory in the container using the following command when launching the Tasks and Tasks Worker services:

docker run \

...

-v /var/lib/luna/current/images:/srv/images

...

Here:

/var/lib/luna/current/images— Path to the previously mounted directory with images from the network disk./srv/images- Path to the directory with the images in the container where they will be moved from the network disk. This path should be specified in the request body of the Estimator task (the "path" parameter).

As for S3-like storage, the ability to recursively download images from nested bucket folders is available.

FTP server as image source of estimator task

For this type of source, the following parameters can be set in the request body for connecting to the FTP server:

- "host" — FTP server IP address or hostname (required).

- "port" — FTP server port.

- "max_sessions" — Maximum number of allowed sessions on the FTP server.

- "user", "password" — Authorization parameters (required).

As in Estimator tasks using S3-like storage or network disk as image sources, it is possible to set the path to the directory with images, recursively receive images from nested directories, select the type of transferred images, and specify the prefix and postfix.

See an example of using prefixes and postfixes in the "/tasks/estimator" resource description.

Samba as image source of estimator task

For this type of source, the parameters are similar to those of an FTP server, except for the "max_sessions" parameter. Also, if authorization data is not specified, the connection to Samba will be performed as a guest.

Task processing#

The Tasks service includes the Tasks service and Tasks workers. Tasks receives requests to the Tasks service, creates tasks in the DB and sends subtasks to Tasks workers. The workers are implemented as a separate Tasks Worker container. Tasks workers receive subtasks and perform all the required requests to other services to solve the subtasks.

The general approach for working with tasks is listed below.

- User sends the request for creation of a new task.

- Tasks service creates a new task and sends subtasks to workers.

- Tasks workers process subtasks and create reports.

- If several workers have processed subtasks and have created several reports, the worker, which finished the last subtask, gathers all the reports and creates a single report.

- When the task is finished, the last worker updated its status in the Tasks database.

- User can send requests to receive information about tasks and subtasks and number of active tasks. The user can cancel or delete tasks.

- User can receive information about errors that occurred during execution of the tasks.

- After the task is finished the user can send a request to receive results of the task.

See the "Tasks diagrams" section for details about tasks processing.

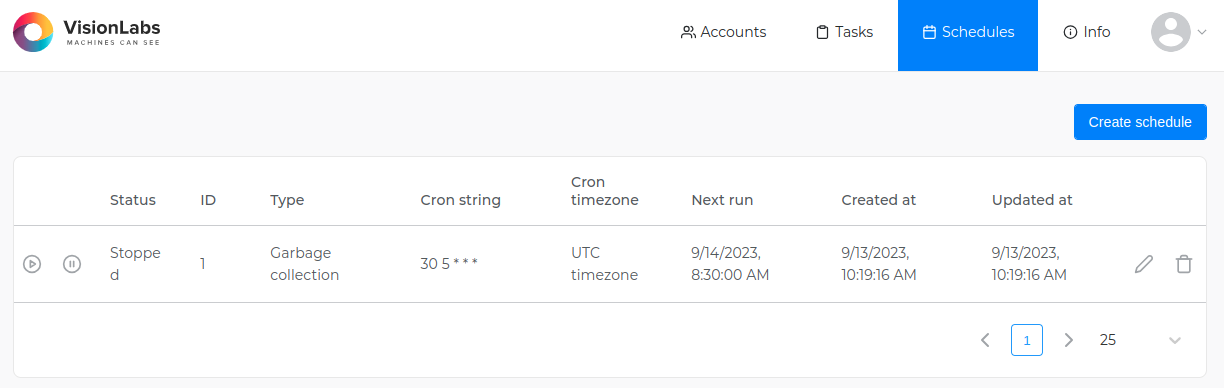

Running scheduled tasks#

In LUNA PLATFORM, it is possible to set a schedule for Garbage collection, Clusterization, Exporter, Linker, Estimator, Additional extract, Cross-matching and Roc-curve calculating tasks.

To use a filter relative to the current time ("now-time"), the current time will be counted not from the creation of the schedule, but from the creation of the task by the schedule in accordance with the cron expression. See "Now-time filters" for details.

The schedule is created using the request "create tasks schedule" to the API service, which specifies the contents of the task being created and the time interval for its launch. To specify the time interval, Cron expressions are used.

Cron expressions are used to determine the task execution schedule. They consist of five fields separated by spaces. Each field defines a specific time interval in which the task should be completed. The week number starts from Sunday.

For tasks that can only be performed using the Admin service (for example, the task of removing some objects using the GC task), you can assign a schedule only in the Admin service.

In response to the request, a "schedule_id" is issued, which can be used to get information about the status of the task, the time of the next task, etc. (requests "get tasks schedule and "get tasks schedules). The id and all additional information are stored in the "schedule" table of the Tasks database.

If necessary, you can create a delayed schedule, and then activate it using the "action" = "start" parameter of the "patch tasks schedule" request. Similarly, you can stop the scheduled task using "action" = "stop". To delete a schedule, you can use the "delete tasks schedule" request.

Permissions to work with schedules are specified in the token with the "task" permission. This means that if the user has permission to work with tasks, then he will also be able to use the schedule.

The possibility of schedule creation is also available for Lambda tasks.

Examples of Cron expressions#

This section describes various examples of Cron expressions.

- Run the task every day at 3 a.m.:

0 3 * * *

- Run the task every Friday at 18:30:

30 18 * * 5

- Run the task every first day of the month at noon:

0 12 1 * *

- Run the task every 15 minutes:

*/15 * * * *

- Run the task every morning at 8:00, except weekends (Saturday and Sunday):

0 8 * * 1-5

- Run the task at 9:00 am on the first and 15th day of each month, but only if it is Monday:

0 9 1,15 * 1

Send notification about task and subtask status changes#

If necessary, you can send notifications about changes in task and subtask status using the callback mechanism. Callbacks allow you to send data to a third-party system at a specified URL or to Telegram. To configure notifications, you need to configure the "notification_policy" in the request parameters of the corresponding task.

You can also configure sending notifications for tasks and subtask in the schedule settings.

If necessary, you can obtain information about the current state of the notification policy or change some policy data using "get task notification policy" and "replace task notification policy" requests.

Additional protection for passwords and tokens#

Passwords and tokens passed in Estimator task and in "notification_policy" can be additionally encrypted. To do this, pass custom values to the FERNET_PASSPHRASE and SALT environment variables when starting the Tasks service container.

FERNET_PASSPHRASE is the password or key used to encrypt data using the Fernet algorithm.

SALT is a random string added to the password before it is hashed.

Fernet is a symmetric encryption algorithm that provides authentication and data integrity as well as confidentiality. With this algorithm, the same key is used to encrypt and decrypt data.

Salt is added to make it more difficult to crack the password by brute force. Each time a password is hashed, a unique string is used, making identical passwords hashed differently. This increases the security of the system, especially if users have the same passwords.

Example of a container startup command passing environment variables:

docker run \

--env=CONFIGURATOR_HOST=127.0.0.1 \

--env=FERNET_PASSPHRASE=security_passphrase.

--env=SALT=salt_for_passwords_and_tokens.

...

Important: When the container is started with the above environment variables specified, the old passwords and tokens will no longer work. Additional migration steps must be performed (see section below).

Add encryption when updating#